Cloud Networking

Introduction

Manhattan Active® Platform is deployed as a distributed application using Google Kubernetes Engine (GKE) on Google Cloud Platform. When Kubernetes Engine orchestrates the Manhattan Active applications, the network design of the applications and their hosts matters: it determines how well the infrastructure performs and how securely the applications communicate with internal and external services.

Below are the key networking components we designed and implemented for Manhattan Active® Platform.

VPC

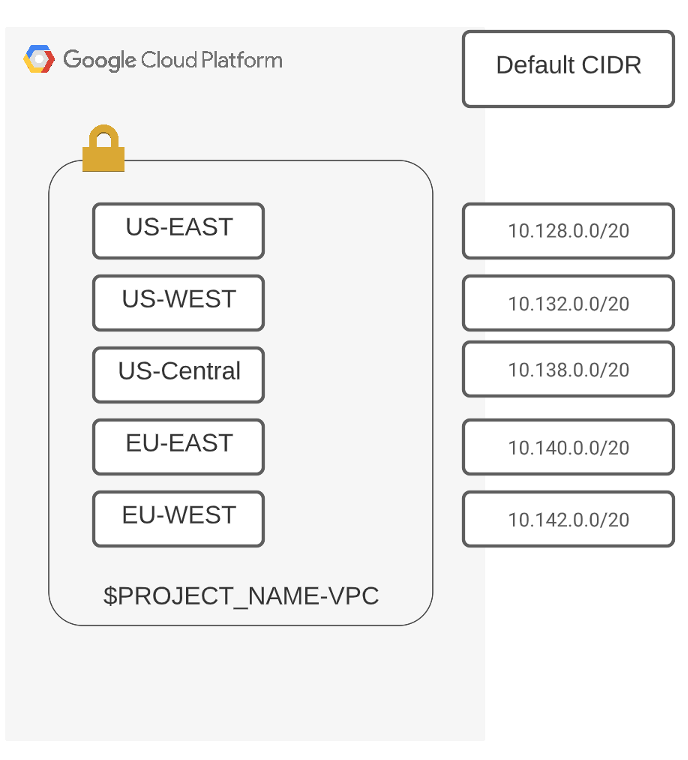

A Virtual Private Cloud (VPC) is a global, private, isolated virtual network partition that provides managed networking functionality for each Kubernetes cluster. It is the fundamental networking resource and is created before the Kubernetes cluster is deployed. When we create the Google project, a dedicated auto mode VPC is created. Each customer environment has its own unique, independent VPC, and the network firewall and ACL rules for each environment forbid network-level access across environments. Because the VPC is in auto mode, subnets are created automatically in the Google region, and the Kubernetes cluster uses those subnets when creating the cluster nodes.

VPC Flow Logs are enabled for every VPC. Flow logs record a sample of the network flows sent from and received by the instances used as Google Kubernetes Engine nodes. These logs can be used for network monitoring, forensics, real-time security analysis, and expense optimization.

Planning the GKE IP address

GKE clusters require a unique IP address for every Pod. In GKE, all these addresses can be routed throughout the VPC network. Therefore, IP address planning is necessary because addresses cannot overlap with private IP address space used on-premises or in other connected environments. The following sections suggest strategies for IP address management with GKE.

- Our cluster type is private, and each node has an IP address assigned from the cluster’s Virtual Private Cloud (VPC) network.

- By default, each cluster node is assigned a /24 CIDR block from which its GKE Pods receive their IP addresses.

- RFC 1918 IP address ranges are used for the GKE Pods and Services.
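
The planning rules above can be checked with a few lines of Python. This is only a minimal sketch: the helper names and the example ranges used with them (such as a `10.8.0.0/14` Pod range) are hypothetical and are not taken from any Manhattan Active® environment. It checks that a candidate Pod range is RFC 1918 space, that it does not overlap ranges already in use elsewhere, and how many per-node /24 blocks it can supply.

```python
import ipaddress

# The three private ranges defined by RFC 1918.
RFC1918 = [ipaddress.ip_network(n)
           for n in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")]


def is_rfc1918(cidr):
    """Return True if the whole CIDR block lies inside RFC 1918 space."""
    net = ipaddress.ip_network(cidr)
    return any(net.subnet_of(parent) for parent in RFC1918)


def overlaps_any(candidate, in_use):
    """Return True if the candidate block overlaps any block already in use."""
    cand = ipaddress.ip_network(candidate)
    return any(cand.overlaps(ipaddress.ip_network(other)) for other in in_use)


def max_nodes(pod_range, per_node_prefix=24):
    """Number of per-node blocks (default /24) that fit in the Pod range."""
    net = ipaddress.ip_network(pod_range)
    if per_node_prefix < net.prefixlen:
        return 0
    return 2 ** (per_node_prefix - net.prefixlen)
```

For instance, a hypothetical `10.8.0.0/14` Pod range is RFC 1918 space and holds 1024 per-node /24 blocks, but it would collide with an on-premises network that already uses `10.10.0.0/16`.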

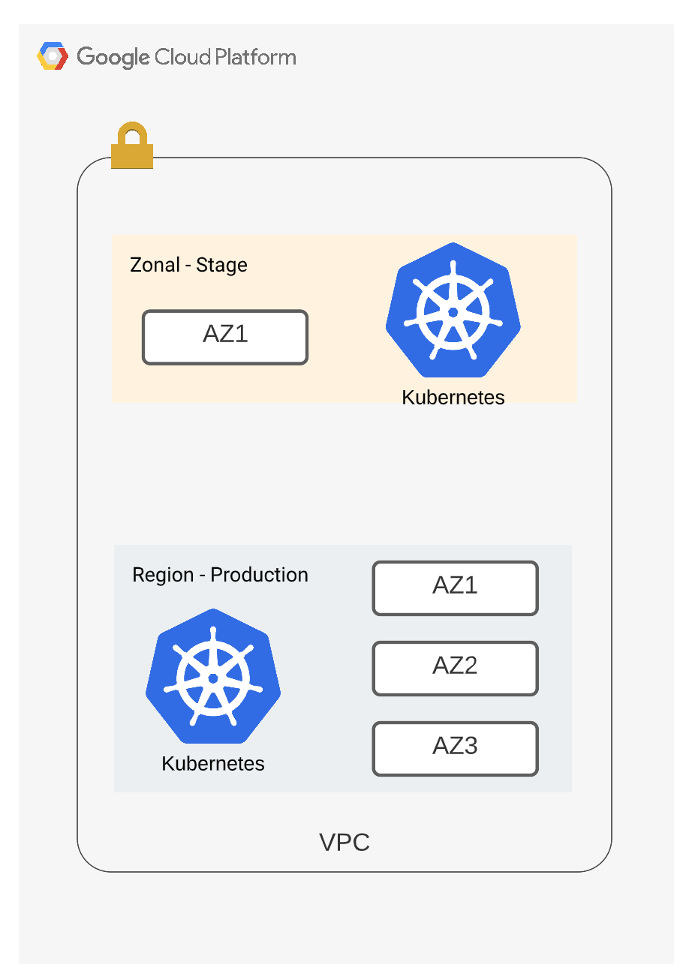

GKE cluster

Kubernetes clusters created for the Manhattan Active® Platform are private by default. Private clusters use nodes that do not have external IP addresses, so clients on the internet cannot connect to the nodes directly. This reduces the attack surface and the risk of compromised workloads. The control plane (master), which is hosted in a Google-managed project, communicates with the nodes via VPC peering. The public endpoint of the GKE control plane is not exposed to the outside world: authorized networks are enabled so that only approved, secure networks can reach it.

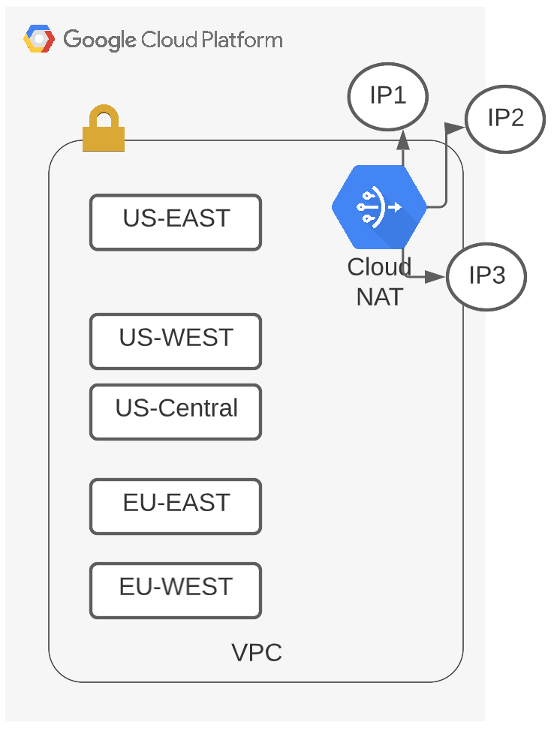

Cloud NAT

Nodes in private GKE clusters have no external IP addresses; by default, all node instances get internal IP addresses only. As a result, Pods running on these nodes cannot reach the internet on their own. We configure the Cloud NAT service to allow private Google Kubernetes Engine (GKE) clusters to connect to the internet.

Cloud NAT implements outbound NAT (network address translation, which maps internal IP addresses to external IP addresses) to allow instances to reach the internet. As part of the Cloud NAT configuration, we can manually reserve a set of public IP addresses, which Cloud NAT then assigns and releases based on workload.

When Active Omni or Active Supply Chain integrates through XBound with a third-party application that uses IP allow-listing (whitelisting) as part of its access mechanism, we can share the Cloud NAT IP addresses instead of the XBound node pool IP addresses.

Private Google Access

Private Google Access is also enabled by default on the VPC where the GKE cluster is deployed. It gives private nodes and their workloads access to Google Cloud APIs and services over Google’s private network.

For example, in all customer environments, clusters use Private Google Access to reach most Google APIs.

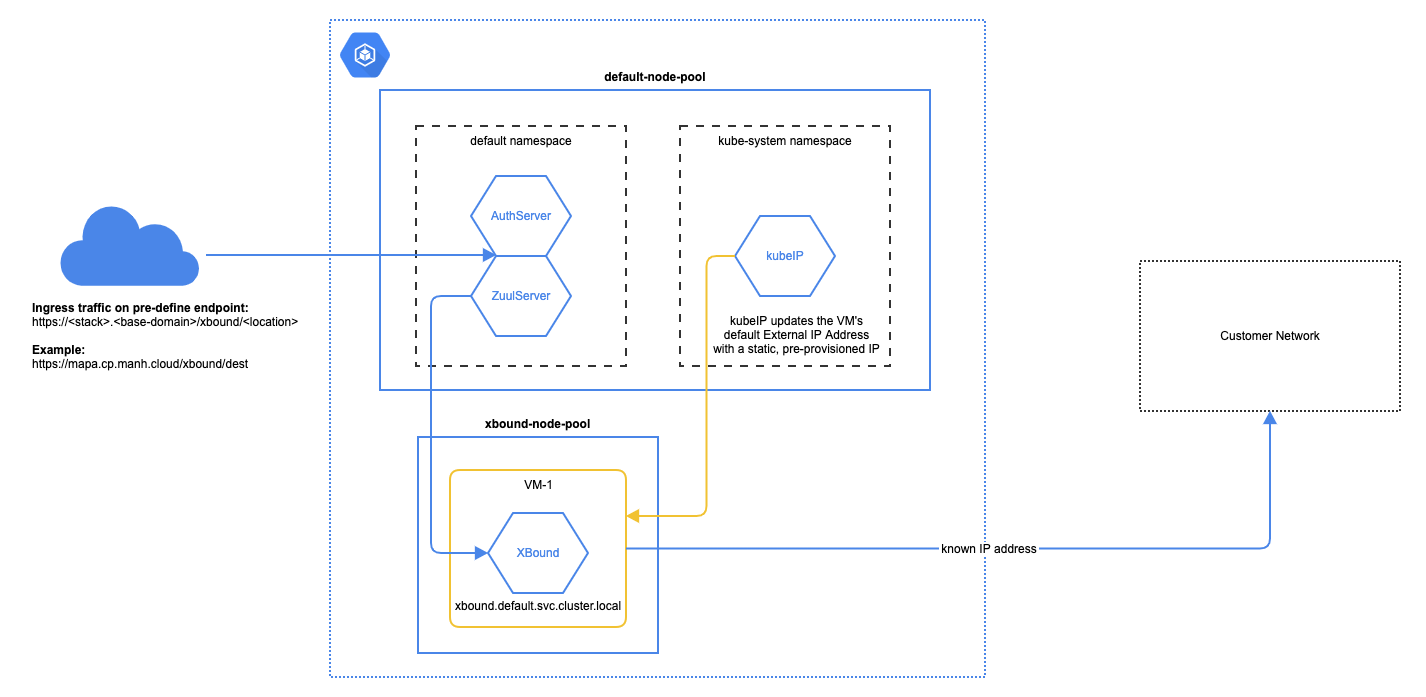

External communication using XBound

XBound is a Java Spring Boot component that wraps the Netflix OSS Zuul library to provide an outbound proxy, or router, that forwards incoming HTTP(S) calls to configured external locations. The diagram below explains how the invocation sequence looks:

As shown above, the base (and the custom) components invoke the XBound endpoints, and based on the mapping configuration in XBound, the calls are routed to the external endpoints. In other words, XBound can also be seen as a “NAT” gateway into the outer world, but with more flexibility and control than the standard NAT configuration that IaaS providers offer.

XBound can run as one or more containers, similar to the application components. However, XBound is always configured to run on a separate, exclusive node pool (called xbound-pool). This node pool has special configuration in place to automatically assign static public IP addresses. Static public IP addresses make it possible to satisfy an external location that requires an IP address restriction (such as a firewall allow-list). Note that the nodes on which the components run (the components-pool) do not have static IP addresses.

Route Configuration

XBound uses Spring properties to define the routes using the Zuul routing syntax. See the example below:

zuul.routes.example.path: /example/**

zuul.routes.example.url: https://example.com

In this simple example, calls made to XBound on path /example/... will get routed to the target https://example.com/... . The ... designates the remainder of the path as is. For example:

curl -s com-manh-cp-xbound:8080/example/foo/bar

…will result in the same call being forwarded to…

https://example.com/foo/bar

Here’s another example to better explain the concept (real example that can be tested):

Properties in XBound:

---------------------

zuul.routes.ifconfig.path: /ifconfig/**

zuul.routes.ifconfig.url: https://ifconfig.co

Invocation to XBound (presumably from a RestTemplate in the component code):

----------------------------------------------------------------------------

curl -s com-manh-cp-xbound:8080/ifconfig

Invocation is routed to:

------------------------

https://ifconfig.co

If the invocation of XBound is changed:

---------------------------------------

curl -s com-manh-cp-xbound:8080/ifconfig/ip

Then the target is routed to:

-----------------------------

https://ifconfig.co/ip
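
The mapping in the examples above can be modelled in a few lines of Python. This is a simplified sketch of the prefix-and-remainder behaviour described in this section, not Zuul's actual route matcher. The `resolve` function and the `ROUTES` dictionary are hypothetical names introduced only for illustration.

```python
# Route table mirroring the zuul.routes.<name>.path / .url pairs shown above.
ROUTES = {
    "/example/**": "https://example.com",
    "/ifconfig/**": "https://ifconfig.co",
}


def resolve(routes, request_path):
    """Return the external URL a request path would be forwarded to, or None.

    The part of the path matched by '/**' is appended to the target URL as is.
    """
    for pattern, target in routes.items():
        # Strip the trailing '/**' wildcard to get the fixed prefix.
        base = pattern[:-3] if pattern.endswith("/**") else pattern
        if request_path == base or request_path.startswith(base + "/"):
            remainder = request_path[len(base):]
            return target.rstrip("/") + remainder
    return None
```

With this model, `resolve(ROUTES, "/ifconfig/ip")` yields `https://ifconfig.co/ip`, and a path that matches no configured prefix yields `None`.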

HTTP Authentication method for XBound

For HTTP(s) calls that originate from the application components, but reach the target external endpoint without any HTTP(s) authentication mechanism, nothing additional needs to be configured. However, if HTTP(s) authentication is required, then depending on the type of authentication requirement, additional configuration may be needed:

- Basic auth: No additional configuration required. The Authorization: Basic header with the base64-encoded credentials is passed as is to the forwarded invocation. In other words, the external endpoint receives the authorization header unchanged.

- OAuth token: No additional configuration required. The Authorization: Bearer header with the access token is passed as is to the forwarded invocation. In other words, the external endpoint receives the authorization header unchanged.

- Certificate-based auth: XBound supports client certificate configuration. However, this feature is supported only for custom extension requirements, and not for base calls. The reason for this restriction is that client certificates are typically customer-specific, whereas the base application code needs to be client-agnostic.
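
As an illustration of the pass-through behaviour for Basic auth and OAuth tokens, the sketch below builds a Basic authorization header on the caller side and models a proxy that copies request headers unchanged. Both function names are hypothetical and the credentials are placeholders. The sketch does not reproduce XBound's internals; it only shows that the caller constructs the header and the external endpoint sees the same value.

```python
import base64


def basic_auth_header(username, password):
    """Build the 'Authorization: Basic ...' header from plain credentials."""
    raw = f"{username}:{password}".encode("utf-8")
    token = base64.b64encode(raw).decode("ascii")
    return {"Authorization": f"Basic {token}"}


def forward_headers(incoming):
    """Model of pass-through: the forwarded call carries the same headers."""
    return dict(incoming)


# Caller side: placeholder credentials, for illustration only.
request_headers = basic_auth_header("user", "pass")
# What the external endpoint would receive in this simplified model.
forwarded = forward_headers(request_headers)
```

A Bearer token is handled the same way in this model: the caller sets `Authorization: Bearer <token>` and the header is forwarded untouched.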

Edge Service

An edge service is a service that provides an entry point into an enterprise or the network of a service provider. Edge services can be as simple as a stateless router or a switch and as complex as a full-fledged web application that addresses not only routing but also other concerns such as security, authorization, throttling etc.

Edge services typically address one or more of the following concerns:

- Network security

- User authentication and authorization

- Network protocol translation

- Payload transformation and/or translation

- Rate limiting and Throttling

- Resiliency

- Performance

Why do we need edge services?

We need edge services for our Active Supply Chain solutions to:

- access the devices (e.g. printers, MHE, RF and touch devices) at the customer’s facility and on the customer’s network

- translate between network protocols (e.g. TCP, HTTPS)

- provide a single way to interact with the Active Supply Chain solutions on the cloud

- ensure that the customer’s applications and devices contact the Active Supply Chain solutions with adequate security and access controls in place

- improve user experience

Edge service components

These components should:

- communicate with Manhattan Active Supply Chain components (within Manhattan’s VPC) through the public load balancer/gateway using HTTPS protocol

- not contain any configuration data, and not serve any UIs or HTTPS services for end users

- communicate internal state and/or transient events to an auditing system hosted on Manhattan’s VPC

- log application logs to an external logging system like StackDriver logging on the Google Cloud Platform (GCP)

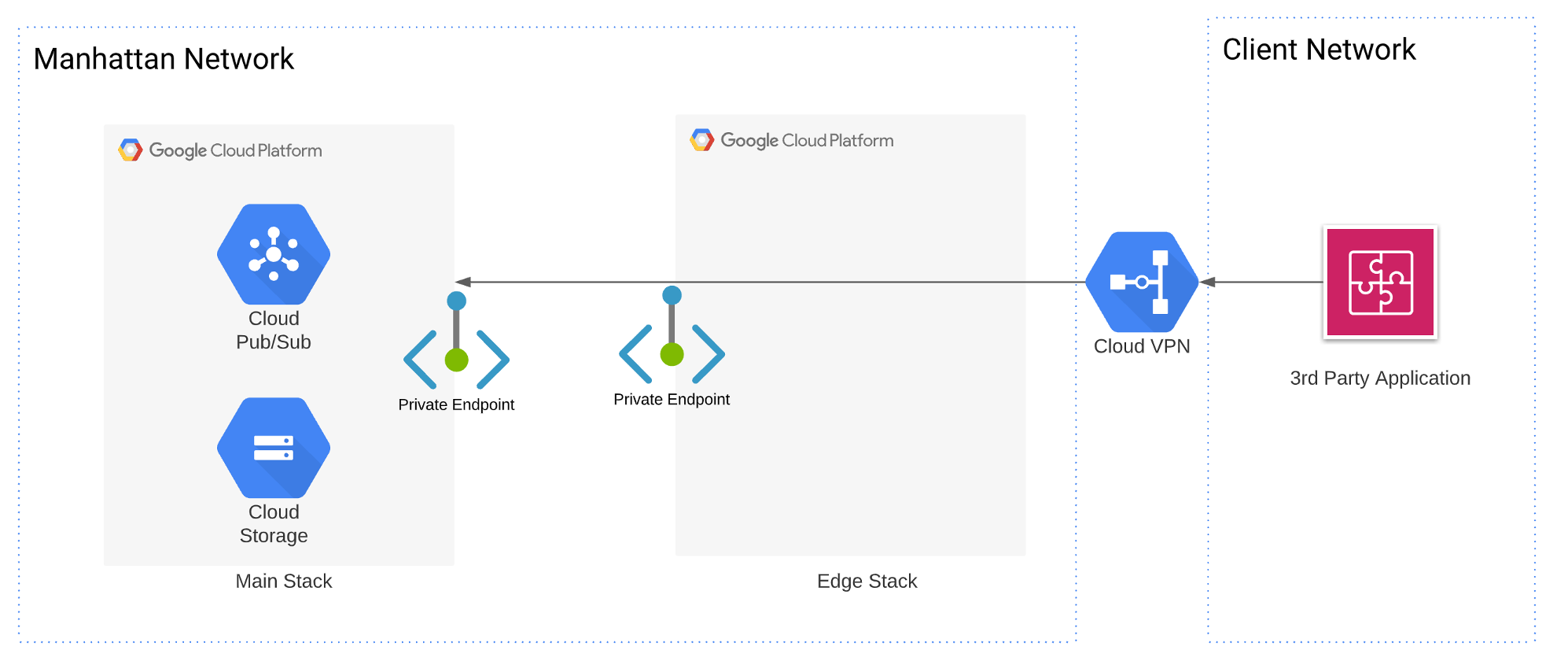

Edge Networking

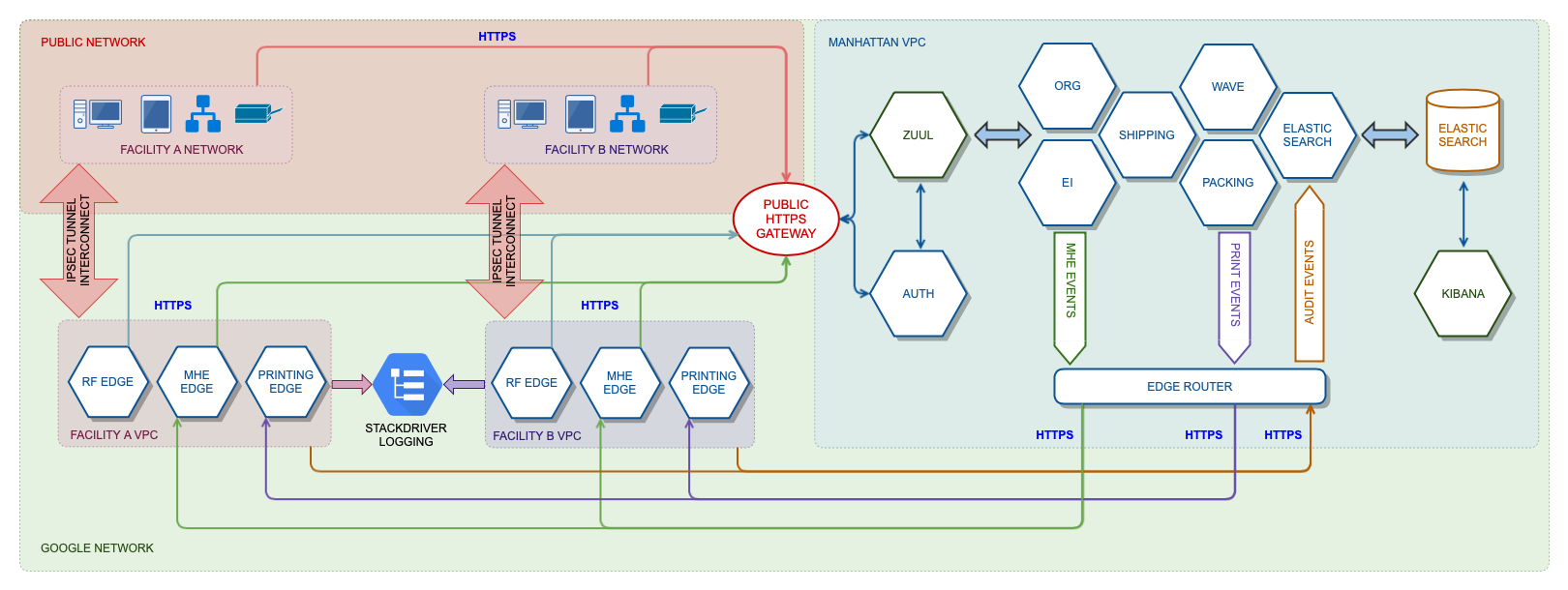

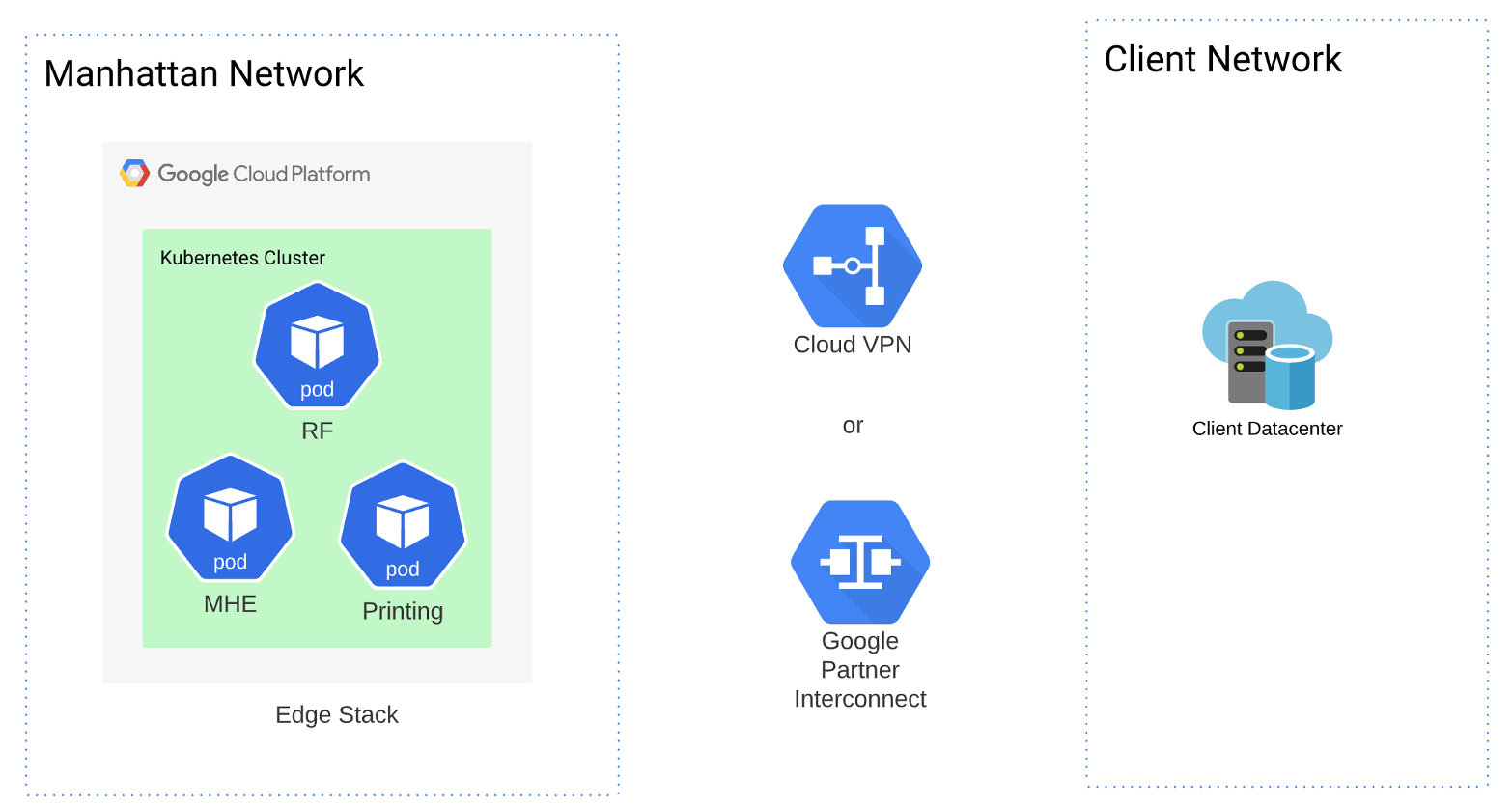

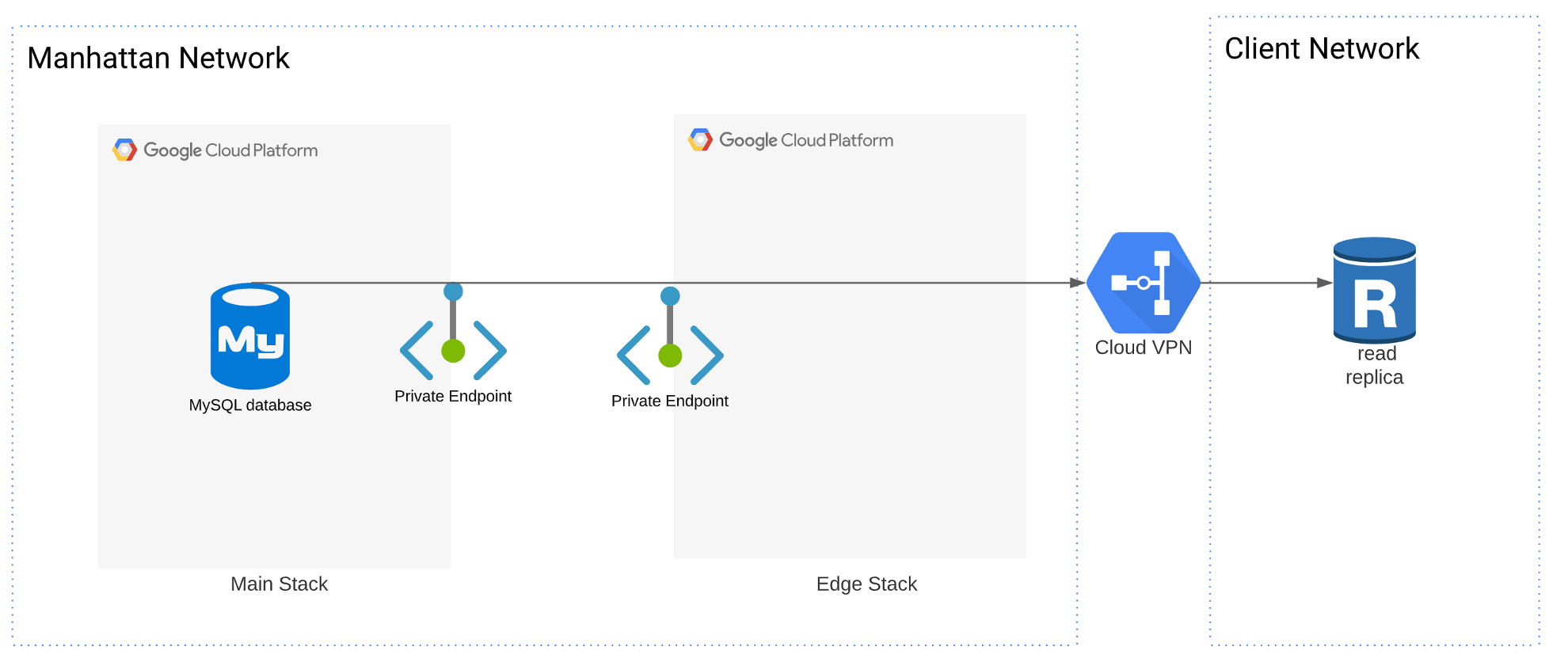

Edge services will be deployed within a Virtual Private Cloud (VPC) on GCP for each facility. This VPC will be peered with the customer’s network at the facility using VPN (IPsec tunnel) or alternative suitable peering mechanisms. Once peered, all the devices on the customer’s facilities network will be discoverable by the edge components for that facility. The following diagram shows the deployment and the network boundaries.

Connectivity to Edge Networking

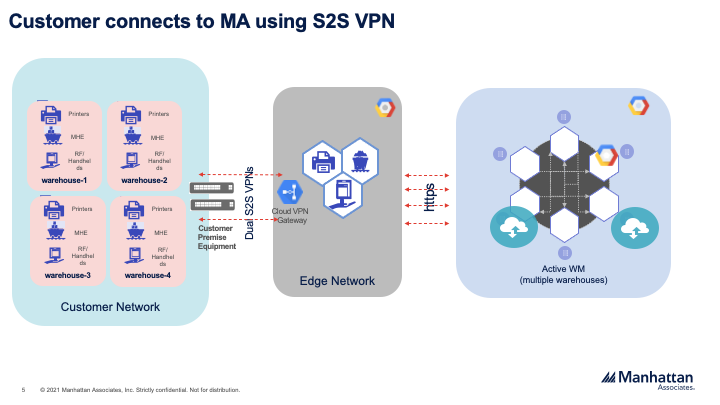

There are multiple ways to connect the Edge stack to the customer network. Cloud VPN and Cloud Interconnect are the two options typically used. Cloud Interconnect is the more expensive option and is generally used for multi-gigabit bandwidth requirements, whereas Cloud VPN is inexpensive and provides enough bandwidth for the SC Edge stack.

Cloud VPN

Cloud VPN securely connects the peer network to the Virtual Private Cloud (VPC) network through an IPsec VPN connection. Traffic traveling between the two networks is encrypted by one VPN gateway and then decrypted by the other VPN gateway.

High-availability (HA) VPN is the default option we recommend for all our customers. HA VPN is a Cloud VPN solution that securely connects the on-premises network to the VPC network through an IPsec VPN connection in a single region, and it provides an SLA of 99.99% service availability. When an HA VPN gateway is connected to a peer gateway, 99.99% availability is guaranteed only on the Google Cloud side of the connection; end-to-end availability is subject to proper configuration of the peer VPN gateway. If both sides are Google Cloud gateways and are properly configured, end-to-end 99.99% availability is guaranteed. The recommended default routing model is dynamic; static routing is not supported.

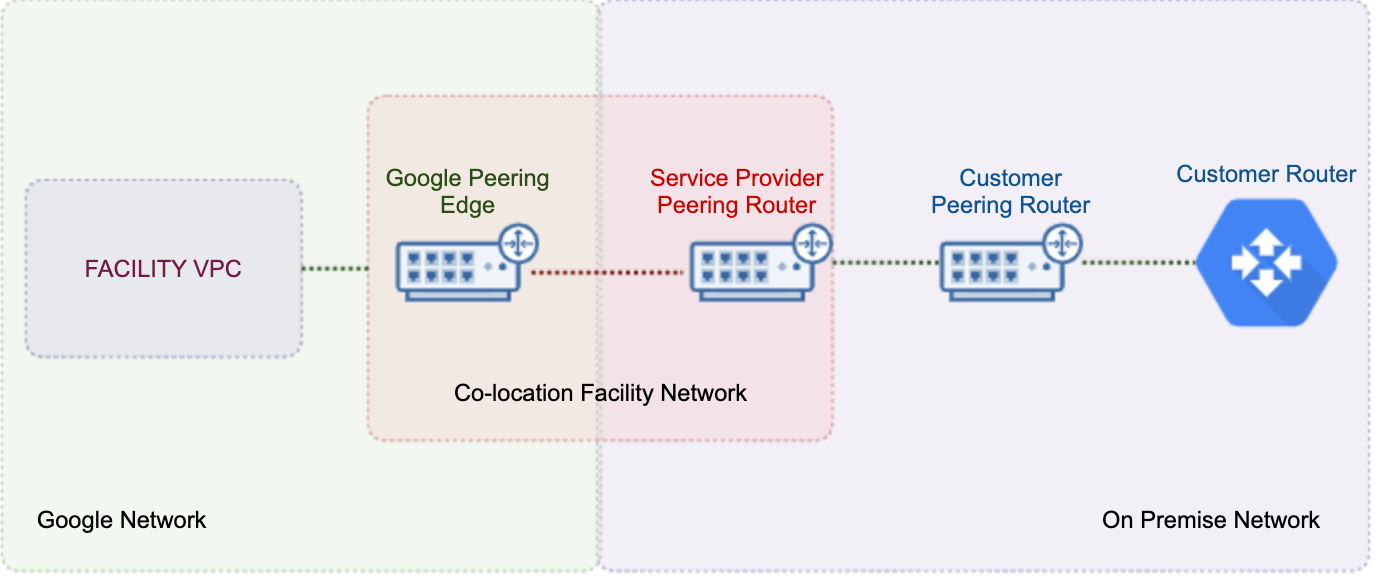

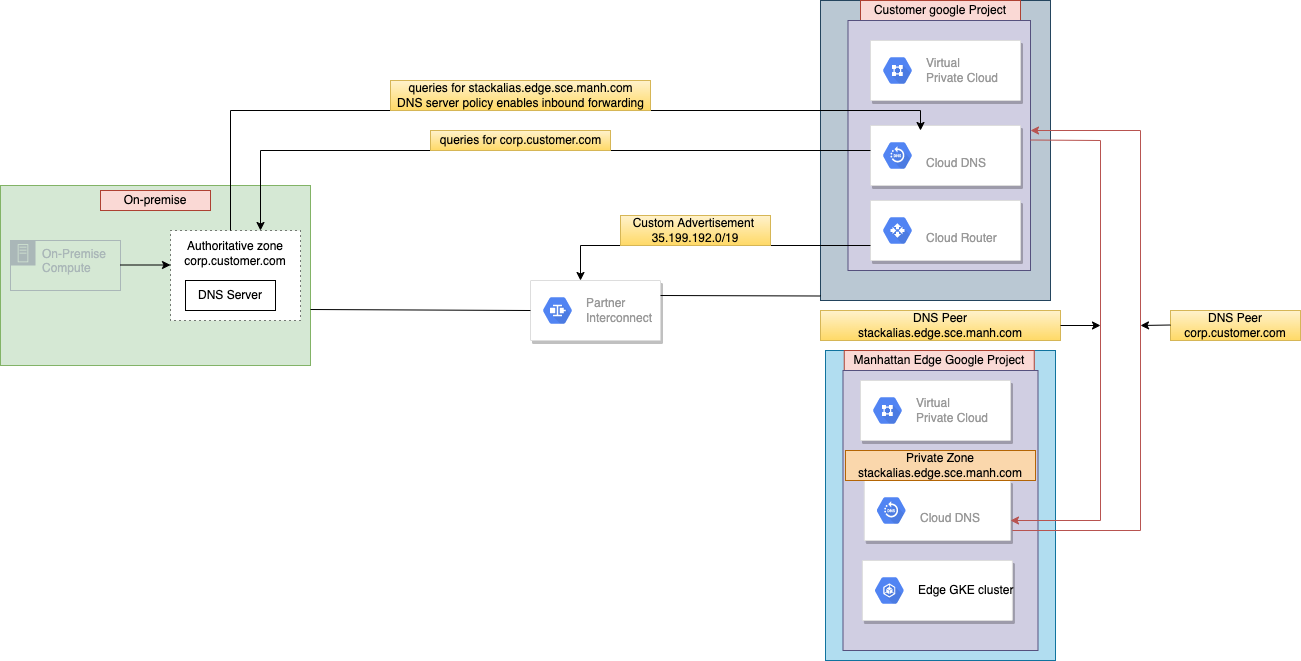

Google Partner Interconnect

Partner Interconnect provides connectivity between the customer network and the Edge network through a supported service provider. A Partner Interconnect connection is useful if the client data center is in a physical location that can’t reach a Dedicated Interconnect colocation facility, or if the data needs don’t warrant an entire 10-Gbps connection.

Service providers have existing physical connections to Google’s network that they make available for customers to use. After a customer has established connectivity with a service provider, they can request a Partner Interconnect connection from that provider. After the service provider provisions the connection, the customer can start passing traffic between the networks over the service provider’s network. Partner Interconnect supports both Layer 2 and Layer 3 connectivity. Depending on the redundancy and SLA requirements, Partner Interconnect can be configured for either 99.9% or 99.99% availability.

If the customer has a Google Cloud presence, the VLAN attachments for Partner Interconnect can be terminated on the customer-owned Google VPC. The Manhattan-owned VPC then has to be peered with the customer VPC to establish connectivity. However, if the customer doesn’t have any Google Cloud presence, the VLAN attachments for Partner Interconnect have to be created on the Manhattan VPC to establish connectivity.
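
To put the two availability levels in perspective, the short calculation below converts an availability percentage into the downtime it permits. It is only back-of-the-envelope arithmetic: the 30-day period is an assumption chosen for illustration, the function name is hypothetical, and the actual SLA terms define how availability is measured.

```python
def allowed_downtime_minutes(availability_pct, days=30):
    """Minutes of downtime permitted over the period at a given availability."""
    total_minutes = days * 24 * 60
    return total_minutes * (1 - availability_pct / 100)


# Roughly 43.2 minutes per 30 days at 99.9%, and 4.32 minutes at 99.99%.
downtime_three_nines = allowed_downtime_minutes(99.9)
downtime_four_nines = allowed_downtime_minutes(99.99)
```

The tenfold difference between the two levels is one way to weigh the extra redundancy that the higher availability target requires.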

Cloud Router

Cloud Router is an important part of the VPN setup. It is used to dynamically exchange routes between the VPC network and the on-premises network through BGP.

By default, Cloud Router advertises the subnets in its own region for regional dynamic routing, or all subnets in the VPC network for global dynamic routing. New subnets are advertised by Cloud Router automatically.
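
The default advertisement rule can be expressed as a small filter. This is a sketch of the rule as described here, not of Cloud Router itself. The function name, the region names, and the CIDR ranges are hypothetical example values.

```python
def advertised_subnets(subnets, router_region, routing_mode):
    """Return the subnet CIDRs a router would advertise by default.

    subnets: list of (region, cidr) tuples for one VPC network.
    routing_mode: 'regional' or 'global' dynamic routing.
    """
    if routing_mode == "global":
        # Global dynamic routing: every subnet in the VPC network.
        return [cidr for _, cidr in subnets]
    if routing_mode == "regional":
        # Regional dynamic routing: only subnets in the router's region.
        return [cidr for region, cidr in subnets if region == router_region]
    raise ValueError(f"unknown routing mode: {routing_mode}")


# Hypothetical subnets in a single VPC network.
EXAMPLE_SUBNETS = [
    ("us-east1", "10.142.0.0/20"),
    ("us-central1", "10.128.0.0/20"),
    ("europe-west1", "10.132.0.0/20"),
]
```

Because the list is evaluated each time, a subnet added to `EXAMPLE_SUBNETS` later is picked up without further changes, which mirrors the automatic advertisement of new subnets.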

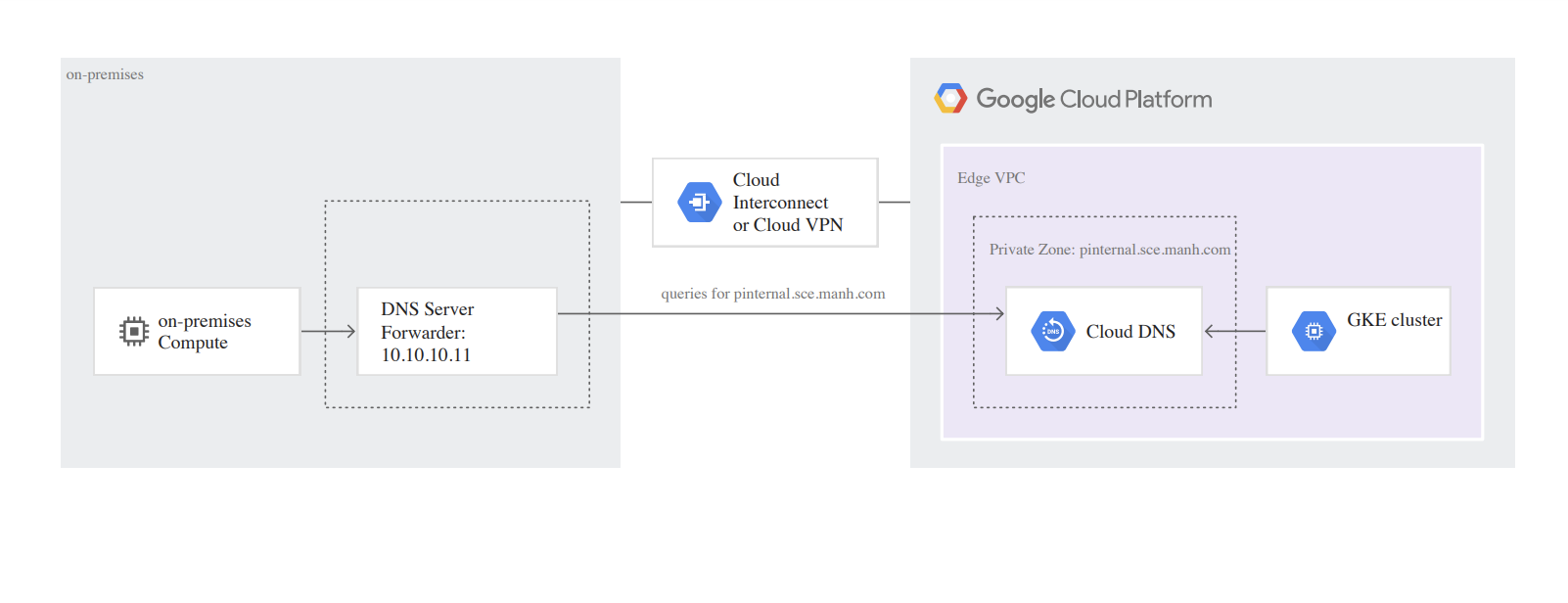

Cloud DNS

All private calls between the customer network and the Manhattan VPC use Google’s Cloud DNS service for DNS resolution.

DNS peering

DNS peering lets you send requests for DNS records that come from one zone’s namespace to another VPC network. For example, an Active SC customer can give the Edge stack access to the DNS records it manages and, similarly, the Edge stack can give the SC customer access to the DNS records it manages. This is primarily used for Edge stack calls going out for printing and outbound MHE, and for incoming RF and MHE calls from the customer network.

Secured Connectivity GCP Service Using Private Service Connect

Manhattan Active® is offered as a SaaS solution to all customers. We provide access to the application through a public HTTPS URL, with authentication for both user access and application REST calls. Because of their security architecture, a few customers want private connectivity between the client network and the API endpoints of the mother-ship stack load balancer.

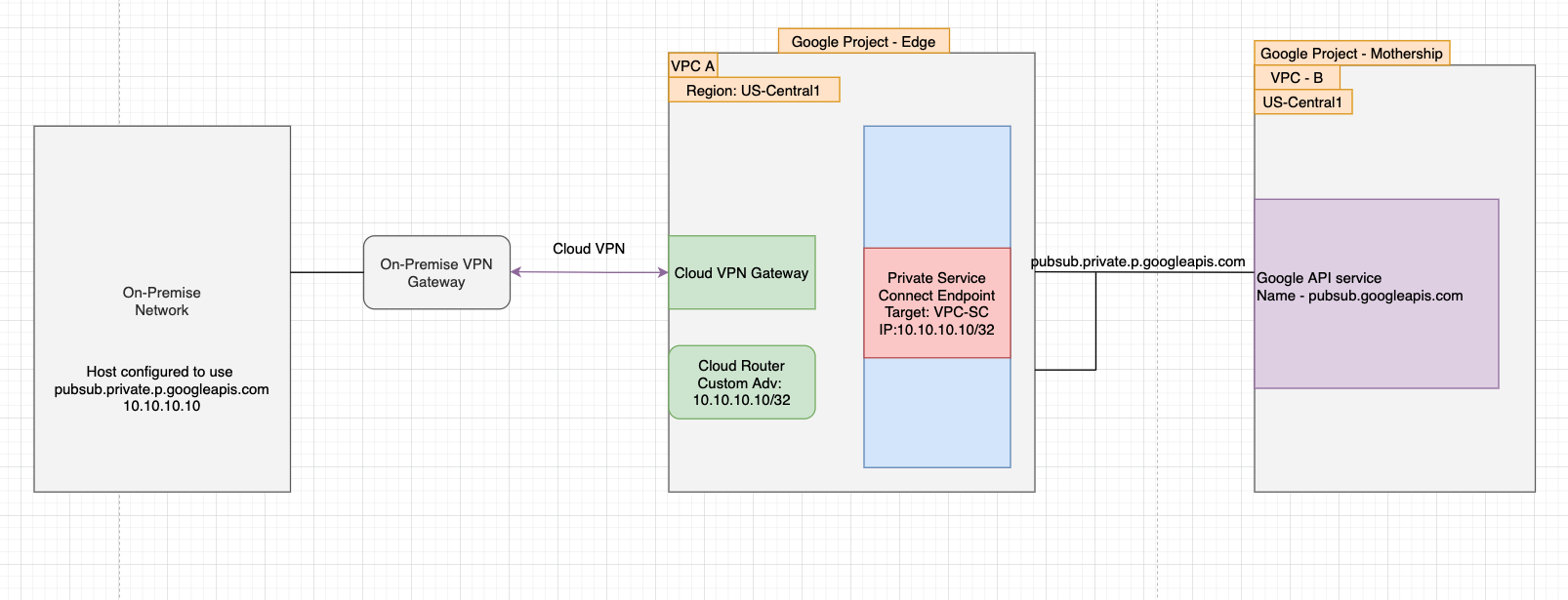

Private Service Connect to access Google APIs

By default, all Google API calls use publicly available IP addresses for service endpoints such as pubsub.googleapis.com. Private Service Connect lets you connect to Google APIs using endpoints with internal IP addresses in the VPC network. This architecture is recommended for customers who expect all Pub/Sub traffic from their host systems to stay private.

Secured External Replication

Secured Data streaming


Authors

- Giri Prasad Jayaraman: Technical Director, Manhattan Active® Platform, R&D

- Akhilesh Narayanan: Sr Principal Software Engineer, Manhattan Active® Platform, R&D
